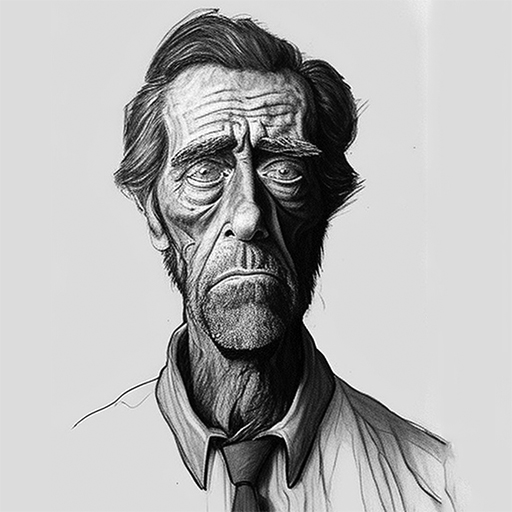

MS Lobotomized Bing Chat:

That's one way to fix it, I guess...

Cheers,

Scott.

Microsoft's new AI-powered Bing Chat service, still in private testing, has been in the headlines for its wild and erratic outputs. But that era has apparently come to an end. At some point during the past two days, Microsoft has significantly curtailed Bing's ability to threaten its users, have existential meltdowns, or declare its love for them.

During Bing Chat's first week, test users noticed that Bing (also known by its code name, Sydney) began to act significantly unhinged when conversations got too long. As a result, Microsoft limited users to 50 messages per day and five inputs per conversation. In addition, Bing Chat will no longer tell you how it feels or talk about itself.

[...]